Wisr AI Systems and Moneylab Enter into an MOU to Develop Agentic AI Cyber Platform Focused on Financial Services Risk

Security research shows that AI‑trained malware (built on the Qwen 2.5 LLM) can bypass Microsoft Defender about 8% of the time, while SRAM-trained variants struggle to reach 1%. Developed in just three months on a $1,600 budget, it demonstrates how reinforcement learning enables automated creation of evasive malware at scale. With Black Hat 2025 slated to expose its inner workings, this marks a major escalation in cybersecurity: adversaries are now using AI to automate and optimize attack craft. Responding demands higher-fidelity detection models and adversarial ML defenses.

Wisr AI Systems Announces Alpha Launch of RiskAssure: Integrating Agentic AI for Instant Third-Party Risk and Compliance Analysis

Wisr CEO to Speak at 2025 Information Technology Symposium on Agentic AI and Third-Party Risk Management

Wisr AI Among Canada’s Top 100 AI Companies

Proactive Defense: The UK’s Vulnerability Research Initiative as AI Security Blueprint

The UK’s National Cyber Security Centre (NCSC) established the Vulnerability Research Initiative (VRI) to expand vulnerability discovery, including plans to target AI-powered weaknesses. Recognizing the limits of its internal team, the NCSC will collaborate with third-party researchers to investigate vulnerabilities in both common and specialized technologies. The program will coordinate expert efforts, share findings with industry, and aim to identify and mitigate risks before they are exploited, particularly those tied to AI modules and supply-chain components.

The EU Code of Practice: Practical Governance for the AI-Governed Enterprise

The European Union published a voluntary Code of Practice for general-purpose AI, complementing the AI Act obligations for general-purpose AI models that take effect August 2, 2025. Crafted by independent experts and stakeholders, the code emphasizes safety, transparency, security, and the use of copyrighted content. Signatories, potentially including major LLM providers, will benefit from reduced administrative burdens and greater legal certainty. The move signals the EU’s embrace of ‘secure-by-design’ AI while controlling risk, which is especially relevant for sectors managing sensitive data and vendor-produced AI systems.

Wisr AI Completes Acquisition of RiskAssure

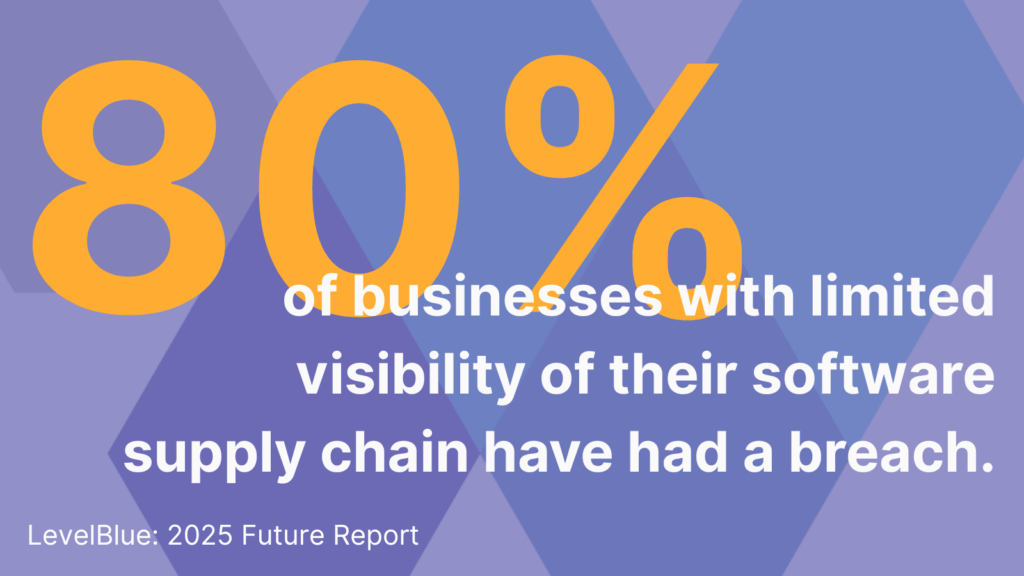

Supply Chain Invisibility: The Silent Breach Catalyst

A new LevelBlue report reveals that only 23% of enterprises have high visibility into their software supply chains, even though 40% of CEOs consider it their top security risk. Poor visibility correlates with breaches: 80% of organizations with low visibility suffered incidents in the past year, versus 6% of those with strong visibility. Emerging regulations (EU Cyber Resilience Act, U.S. SBOM mandates) are accelerating action, but risk remains. Firms must adopt software bills of materials (SBOMs) and integrate AI‑driven monitoring to reduce third‑party exposure.
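As a rough illustration of what SBOM-based visibility can look like in practice, the sketch below scans a small CycloneDX-style JSON document and flags components that match an advisory list. The component names, versions, and the `ADVISORIES` table are all made up for the example, and `find_flagged_components` is a hypothetical helper, not part of any SBOM tool.

```python
import json
from typing import Dict, List, Set, Tuple

# Hypothetical SBOM in a CycloneDX-style layout ("components" with name/version).
SBOM_JSON = """
{
  "bomFormat": "CycloneDX",
  "specVersion": "1.5",
  "components": [
    {"type": "library", "name": "libalpha", "version": "1.2.0"},
    {"type": "library", "name": "libbeta", "version": "4.0.1"},
    {"type": "library", "name": "libgamma"}
  ]
}
"""

# Hypothetical advisory data: package name -> versions known to be vulnerable.
ADVISORIES: Dict[str, Set[str]] = {
    "libalpha": {"1.2.0", "1.2.1"},
    "libbeta": {"3.9.0"},
}


def find_flagged_components(
    sbom: dict, advisories: Dict[str, Set[str]]
) -> List[Tuple[str, str]]:
    """Return (name, version) pairs from the SBOM that appear in the advisories."""
    flagged = []
    for component in sbom.get("components", []):
        name = component.get("name")
        version = component.get("version")
        # Components without a version cannot be matched; skip them here.
        if name is None or version is None:
            continue
        if version in advisories.get(name, set()):
            flagged.append((name, version))
    return flagged


sbom = json.loads(SBOM_JSON)
print(find_flagged_components(sbom, ADVISORIES))
```

Components with no recorded version are skipped in this sketch; in a real inventory those entries are themselves a visibility gap worth reporting.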

Deepfake Governance Attacks: A New Frontier of Trust Exploitation

Deepfake governance attacks are an evolving form of social engineering. Cloned voices bypass human trust by exploiting familiarity and honor-based workflows. This isn’t just a threat to governments; CEOs face it too. Combating this rising risk requires new authentication for communications and decision-making. Trust must be earned, every time.

Zero‑Trust for AI-First Workplaces: Lessons from Zscaler

Traditional network approaches still rely on IP allowlists, VPN access, and perimeter security, implicitly trusting users once they are inside. With distributed workforces and AI-integrated SaaS platforms, attackers who gain a foothold can move laterally across internal systems. Every user or AI agent becomes a potential threat vector. Removing implicit trust means protecting each identity, session, and API.
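To make "removing implicit trust" concrete, here is a minimal sketch of per-request authorization in which every call presents a short-lived signed token and network location is never consulted. It is an illustration only and does not describe Zscaler's product; the `issue_token` and `authorize` helpers, the scope names, and the hard-coded `SECRET` are assumptions for the example.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import List, Optional

# Hypothetical demo key; a real deployment would fetch this from a key manager.
SECRET = b"demo-only-secret"


def issue_token(identity: str, scopes: List[str], ttl_seconds: int,
                now: Optional[float] = None) -> str:
    """Sign a short-lived token naming one identity and its allowed scopes."""
    issued_at = time.time() if now is None else now
    payload = json.dumps(
        {"sub": identity, "scopes": scopes, "exp": issued_at + ttl_seconds},
        sort_keys=True,
    ).encode()
    signature = hmac.new(SECRET, payload, hashlib.sha256).hexdigest()
    return base64.urlsafe_b64encode(payload).decode() + "." + signature


def authorize(token: str, required_scope: str,
              now: Optional[float] = None) -> bool:
    """Verify signature, expiry, and scope on every call, with no IP allowlist."""
    current = time.time() if now is None else now
    try:
        encoded, signature = token.rsplit(".", 1)
        payload = base64.urlsafe_b64decode(encoded.encode())
    except ValueError:
        return False  # malformed token
    expected = hmac.new(SECRET, payload, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return False
    claims = json.loads(payload)
    return claims["exp"] > current and required_scope in claims["scopes"]


token = issue_token("agent-7", ["read:invoices"], ttl_seconds=60)
print(authorize(token, "read:invoices"))   # allowed scope, not yet expired
print(authorize(token, "write:invoices"))  # scope was never granted
```

The same check applies whether the caller is a human user or an AI agent: each identity gets its own narrowly scoped token, and an expired or tampered token is rejected regardless of where the request originates.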